December 15, 2020


AWS’ New Machine Learning Services Announced at re:Invent 2020

Author:

Provectus, AI-first consultancy and solutions provider.

AWS re:Invent 2020 is over. Held as a virtual conference for the first time, it did not fall short. Despite the pandemic, the conference was packed with keynotes, leadership sessions, tracks, hands-on activities, and tons of “play” content as usual — all done to convey the message “We are leaders in the cloud space, and we’re not going anywhere, no matter the scale of our competition.”

2020 was a success for Amazon Web Services as a whole, and especially for its AI/ML division. In their keynotes, both Andy Jassy, CEO of AWS, and Swami Sivasubramanian, VP of Amazon AI, did their best to reinforce the image of AWS as a leader in artificial intelligence and machine learning.

And for good reasons:

- In 2020, AWS released 250+ new features for AI and machine learning, adding to what it calls the broadest and deepest stack of AI/ML services

- In the framework niche, over 90% of cloud-based TensorFlow and PyTorch now runs on AWS

- AWS infrastructure is still hard to rival in terms of high performance, cost-efficiency, and scalability (both hardware- and software-wise)

As an AWS Premier Consulting Partner, Provectus shares our vision of the future with Amazon Web Services. Our common goal is to empower businesses with AI, and we are excited for the opportunity to use AWS’ latest AI/ML services.

At AWS re:Invent, Provectus was named an AWS Launch Partner for AWS Panorama, Amazon Lookout for Vision, and Amazon Lookout for Metrics. This recognition substantiates our expertise in delivering Computer Vision (CV) solutions, and solutions for anomaly, defect, and outlier detection in data. Let’s explore these and other AI/ML services announced at AWS re:Invent 2020 in more detail.

Amazon SageMaker and Tools for Data Scientists

Services for ML engineers and data scientists were the focus of re:Invent 2020. This was the first year that an entire keynote was dedicated to new ML services and AI solutions for business.

Although Amazon SageMaker was in the limelight as usual, other useful services, capabilities, and updates were also announced. One of the first services to hit the stage was AWS Glue Elastic Views.

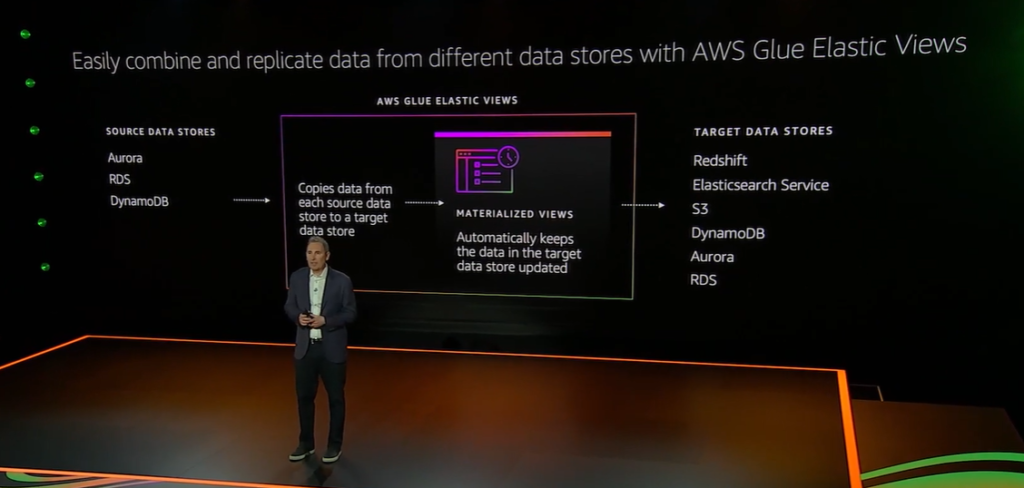

AWS Glue Elastic Views

AWS Glue Elastic Views is a new feature of AWS Glue that enables developers to build materialized views that combine and replicate data across multiple data stores using SQL.

With AWS Glue Elastic Views, developers do not have to spend months writing custom code to start combining data from multiple data stores, including relational and non-relational databases. All they have to do is use the new feature to access, combine, replicate, and keep their data up-to-date. AWS Glue Elastic Views copies data from a source data store to create a replica in a target data store. It continuously monitors changes to data in the source data stores and automatically updates the materialized views in the target data stores, so that data accessed through a materialized view is always up-to-date.

AWS Glue Elastic Views supports Amazon DynamoDB, Amazon S3, Amazon Redshift, and Amazon Elasticsearch Service, with support for Amazon Relational Database Service, Amazon Aurora, and others to follow. AWS Glue Elastic Views is serverless and automatically scales capacity up or down based on demand, so there’s no infrastructure to manage.

Faster Distributed Training on Amazon SageMaker

Amazon SageMaker distributed training is a new feature of Amazon SageMaker that allows ML engineers to train large deep learning models on large datasets up to 40% faster. It uses partitioning algorithms to automatically split large deep learning models and training datasets across AWS GPU instances.

SageMaker distributed training combines two techniques: (a) model parallelism, which breaks large models into smaller parts that each fit on a single GPU and distributes them across multiple GPUs for training; and (b) data parallelism, which splits large datasets across GPUs for concurrent training, to achieve faster training speed. Either technique can be added to PyTorch and TensorFlow training scripts on Amazon SageMaker with only a few lines of additional code.

The efficiencies of SageMaker distributed training enable IT teams to handle ML use cases such as image classification and text-to-speech faster and more efficiently.
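
The data-parallel half of this approach can be illustrated with a minimal, framework-free sketch. It is not the SageMaker library's API: the function names, the toy model `y = w * x`, and the learning rate are all assumptions made for illustration. Each simulated worker computes a gradient on its own shard of the data, and the gradients are averaged before a single shared update.

```python
# Conceptual sketch of data parallelism (illustrative; not the SageMaker API).
# Each "worker" computes a gradient on its own shard of the data; the
# gradients are then averaged before one shared parameter update.

def shard(data, num_workers):
    """Split data into num_workers interleaved shards."""
    return [data[i::num_workers] for i in range(num_workers)]

def gradient(w, batch):
    """Gradient of mean squared error for the toy model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def data_parallel_step(w, data, num_workers, lr):
    """One training step: per-shard gradients, averaged, then applied."""
    shards = shard(data, num_workers)
    grads = [gradient(w, s) for s in shards]  # would run concurrently on GPUs
    avg_grad = sum(grads) / len(grads)        # stands in for an all-reduce
    return w - lr * avg_grad

# Hypothetical toy dataset generated with a true weight of 3.
data = [(float(x), 3.0 * x) for x in range(1, 9)]

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, data, num_workers=4, lr=0.01)
```

In a real job the per-shard gradients would be computed on separate GPUs and combined over the network; the loop above only mimics that flow on a single machine.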

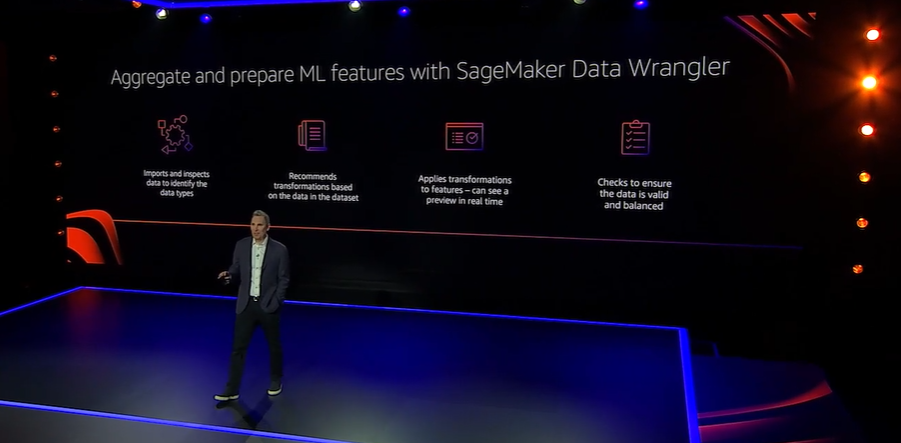

Amazon SageMaker Data Wrangler

Amazon SageMaker Data Wrangler is a new feature of Amazon SageMaker that makes it easier to aggregate and prepare data for machine learning. With it, data scientists can handle data at a much faster clip, reducing the time needed for data preparation from weeks to minutes.

Amazon SageMaker Data Wrangler accelerates data preparation, including data selection, cleansing, exploration, and visualization from a single visual interface. Every step of the workflow can be completed in a few clicks. For example, you can choose data from various data sources and import them with a single click.

SageMaker Data Wrangler facilitates feature engineering. It contains over 300 built-in data transformations to quickly normalize, transform, and combine features without having to write custom code. All transformations can be previewed and inspected in Amazon SageMaker Studio.

Once your data is prepared, you can build fully automated ML workflows with Amazon SageMaker Pipelines and save the engineered features for reuse in Amazon SageMaker Feature Store.

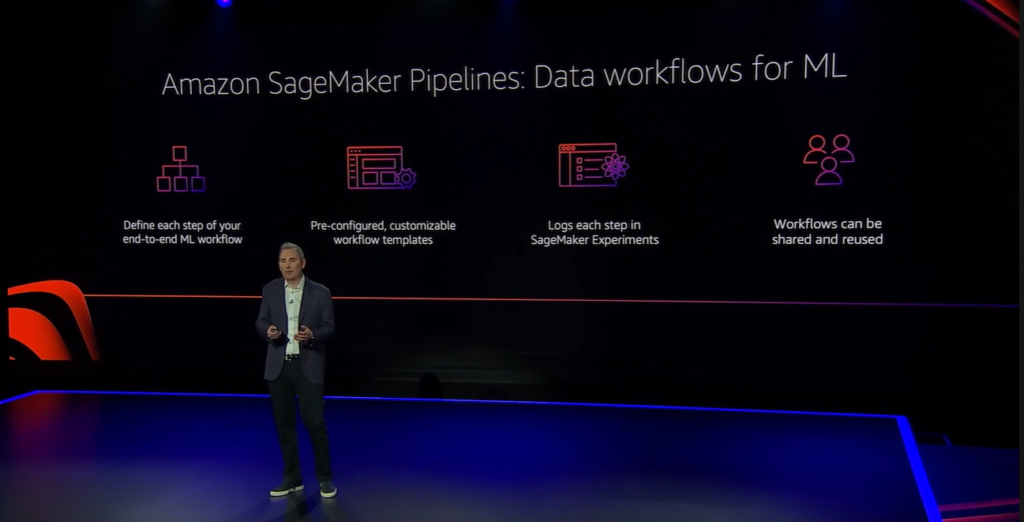

Amazon SageMaker Pipelines

Amazon SageMaker Pipelines is a new feature of Amazon SageMaker that brings continuous integration and continuous delivery practices for machine learning to the AWS AI/ML stack. Built as a fully managed service, SageMaker Pipelines makes it easier to create, automate, and manage end-to-end ML workflows at a larger scale.

SageMaker Pipelines helps automate data loading, data transformation, training and tuning, and deployment. Now, ML engineers do not have to spend months coding to handle every step of the ML workflow.

With SageMaker Pipelines, they can build models faster, process and manage more data, conduct training experiments on an industrial scale, and track multiple model versions to choose the most accurate ones. ML workflows can be shared and re-used across the organization to recreate or optimize models, to drive ML initiatives more efficiently.

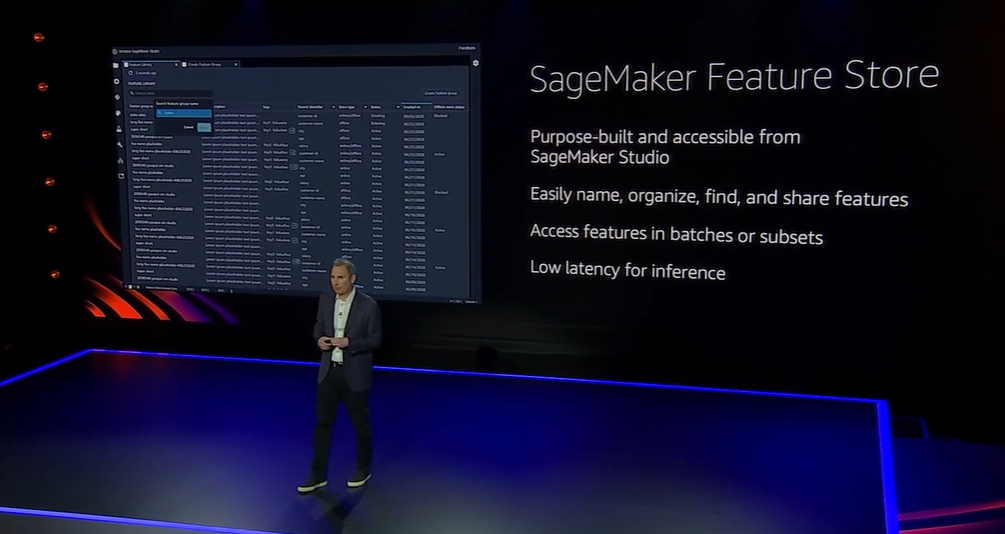

Amazon SageMaker Feature Store

Amazon SageMaker Feature Store is a fully managed, purpose-built repository to store, update, retrieve, and share ML features for real-time and batch ML applications.

A feature store is a key component of the ML stack, because it keeps features consistent and up-to-date across different teams within the organization. By avoiding data silos and unnecessary heavy lifting, they can drive ML processes faster and scale machine learning more efficiently.

Amazon SageMaker Feature Store can be used as a single source of features, meaning teams do not need two separate feature stores, one for training and one for inference. Fully integrated with Amazon SageMaker Pipelines, SageMaker Feature Store makes it easier to add feature search, discovery, and reuse to the ML workflow. Features can be retrieved in real time from the online store, or queried in batches from the offline store using Amazon Athena.
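
The idea of one store serving both training and inference can be sketched with a toy in-memory class. This is purely illustrative and is not the SageMaker Feature Store API: `ToyFeatureStore`, its method names, and the sample feature `orders_30d` are hypothetical. The class keeps a latest-value view for low-latency lookups and a full history for batch reads.

```python
# Toy in-memory feature store (illustrative only; not the SageMaker API).
from collections import defaultdict

class ToyFeatureStore:
    def __init__(self):
        self._latest = {}                   # record id -> (event_time, features)
        self._history = defaultdict(list)   # record id -> all written records

    def put(self, record_id, features, event_time):
        """Write one record to both the history and the latest-value view."""
        self._history[record_id].append((event_time, dict(features)))
        current = self._latest.get(record_id)
        if current is None or event_time >= current[0]:
            self._latest[record_id] = (event_time, dict(features))

    def get_latest(self, record_id):
        """Single-record lookup, as an inference service might perform."""
        return self._latest[record_id][1]

    def history(self, record_id):
        """Time-ordered records, as a training job might read in batch."""
        return sorted(self._history[record_id], key=lambda r: r[0])

store = ToyFeatureStore()
store.put("customer-1", {"orders_30d": 2}, event_time=1)
store.put("customer-1", {"orders_30d": 5}, event_time=2)

latest = store.get_latest("customer-1")
past = store.history("customer-1")
```

Because both views are fed by the same `put` call, training and inference read features that were computed once and written once, which is the consistency argument made above.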

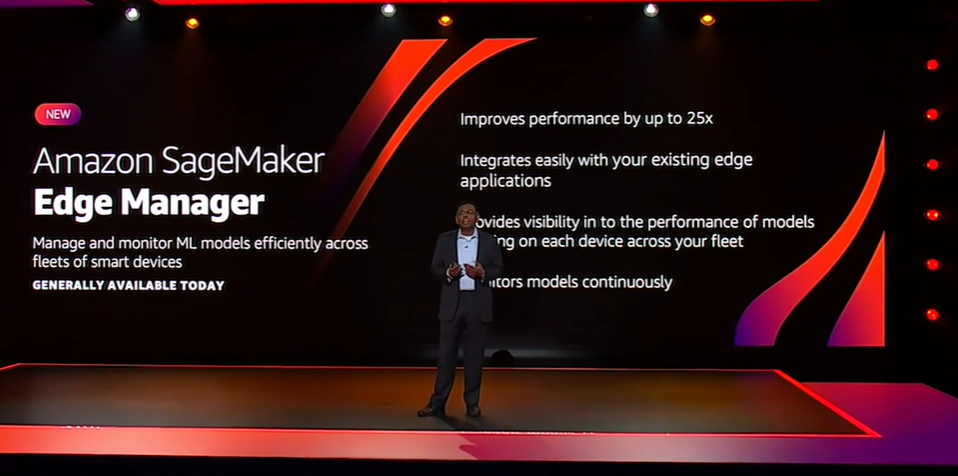

Amazon SageMaker Edge Manager

Amazon SageMaker Edge Manager is a new feature of Amazon SageMaker that makes it easier for developers to manage ML models efficiently across fleets of smart devices. With it, they can optimize, secure, monitor, update, and maintain machine learning models on smart cameras, robots, personal computers, and mobile devices much faster and more easily, without having to write custom code.

SageMaker Edge Manager will be a useful addition for developers already using Amazon SageMaker Neo to manage ML applications for industrial automation, autonomous vehicles, and automated checkouts. It scales the capabilities of SageMaker Neo, allowing for the management of thousands of deployed models running across fleets of edge devices.

Amazon SageMaker Clarify

Amazon SageMaker Clarify is a new capability of Amazon SageMaker that helps businesses detect bias in models and explain model behavior to customers.

Bias can show up anywhere in the machine learning workflow (data selection, data labeling, model training). Data drift is a serious issue as well.

With Amazon SageMaker Clarify, AWS provides developers with greater visibility into their training data and models, so they can identify and limit bias and explain predictions. The service covers major pain points by examining attributes that developers specify to detect potential bias during data preparation, after model training, and after model deployment. It also allows developers to monitor models for bias in real time.
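
To make "detecting potential bias during data preparation" more concrete, here is a minimal sketch of one simple check: comparing the share of positive labels between two groups defined by a chosen attribute. The article does not state which metrics the service computes, so the function names, the `region` attribute, and the sample records are all hypothetical.

```python
# Illustrative pre-training bias check (an assumption, not Clarify's API):
# compare the rate of positive labels between two groups of records.

def positive_rate(labels):
    """Fraction of labels equal to 1."""
    return sum(labels) / len(labels)

def difference_in_positive_rates(records, attribute, group_a, group_b):
    """Positive-label rate of group_a minus that of group_b."""
    labels_a = [r["label"] for r in records if r[attribute] == group_a]
    labels_b = [r["label"] for r in records if r[attribute] == group_b]
    return positive_rate(labels_a) - positive_rate(labels_b)

# Hypothetical training data (label 1 = application approved).
records = [
    {"region": "north", "label": 1},
    {"region": "north", "label": 1},
    {"region": "north", "label": 1},
    {"region": "north", "label": 0},
    {"region": "south", "label": 1},
    {"region": "south", "label": 0},
    {"region": "south", "label": 0},
    {"region": "south", "label": 0},
]

gap = difference_in_positive_rates(records, "region", "north", "south")
```

A gap close to zero suggests the two groups are labeled positively at similar rates; a large gap is a signal to look more closely at how the data was collected or labeled.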

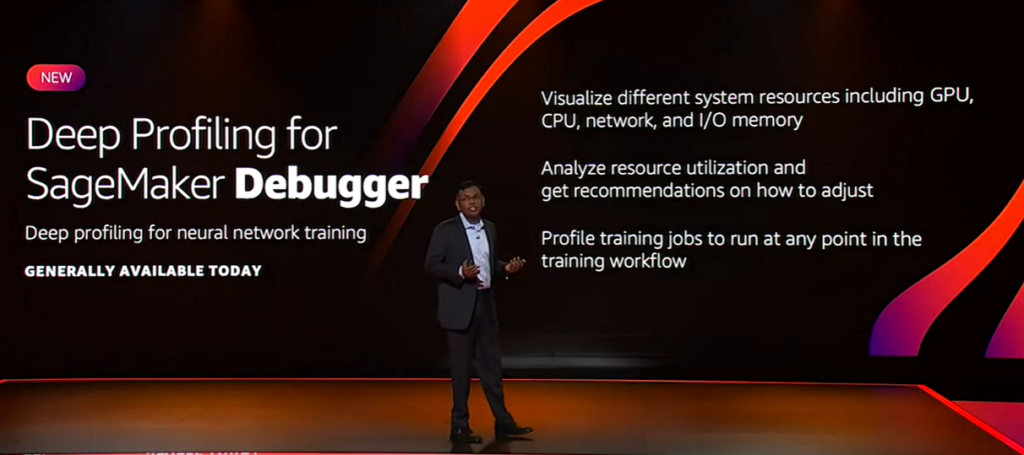

Deep Profiling for SageMaker Debugger

Deep Profiling is a new feature of Amazon SageMaker Debugger that allows developers to profile machine learning models, making it easier to identify and fix training issues caused by hardware resource usage.

Deep profiling enables organizations to maximize available training resources, which is critical as ML models become ever larger and more complex. With its new capabilities, Amazon SageMaker Debugger enables developers to visualize different system resources, including GPU, CPU, network, I/O, and memory; analyze resource utilization and get recommendations on how to adjust it; and profile training jobs at any point in the training workflow.

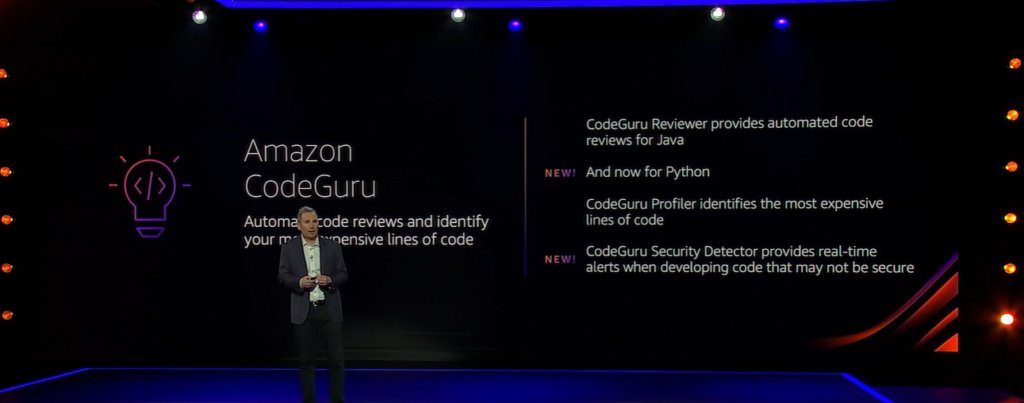

Amazon CodeGuru Reviewer Security Detectors

Amazon CodeGuru Reviewer Security Detectors is a new capability of Amazon CodeGuru Reviewer that makes it easier for developers to find and remediate security issues in their code before deployment. Specifically, it helps identify security risks from Open Web Application Security Project (OWASP) categories and checks code against security best practices for AWS APIs and common Java crypto libraries.

Amazon CodeGuru Reviewer Security Detectors is enabled by machine learning and automated reasoning. AI analyzes data flow to perform whole-program inter-procedural analysis across classes, methods, and files, to detect hard-to-find security vulnerabilities.

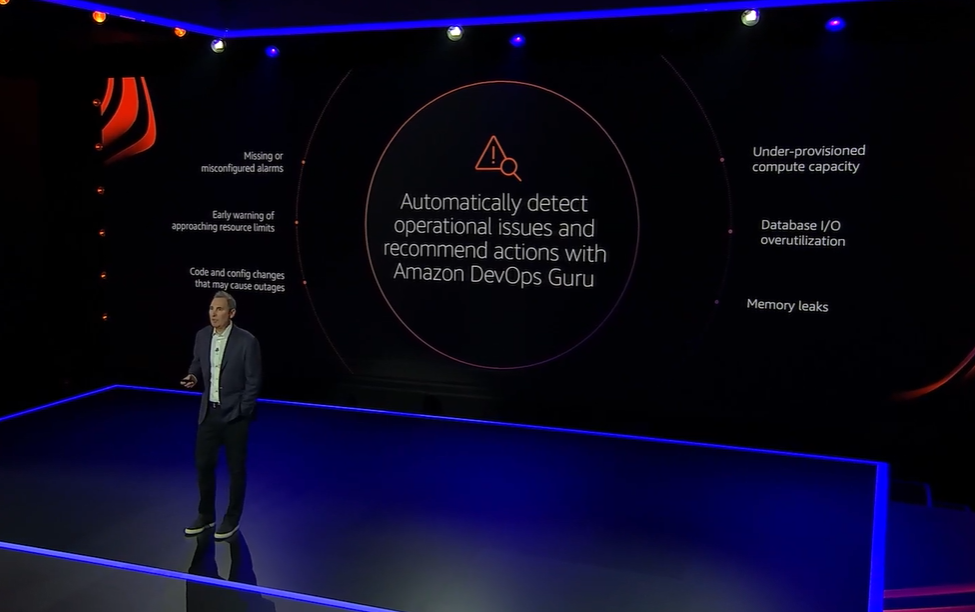

Amazon DevOps Guru

Amazon DevOps Guru is an ML-powered cloud operations service that improves application availability. It provides developers with simple tools to measure and improve the operational performance and availability of their applications while reducing expensive downtime. To start using the service, no machine learning expertise is required.

Using machine learning, Amazon DevOps Guru identifies anomalies in operating patterns. When a critical issue is identified, the service automatically dispatches alerts with a summary of related anomalies, the likely root cause, and context on when and where the issue occurred. In some scenarios, DevOps Guru can also provide prescriptive recommendations on how to fix the issue.

Amazon Redshift ML

Amazon Redshift is one of the most widely used cloud data warehouses. It was only a matter of time before AWS reinforced it with machine learning by releasing Amazon Redshift ML.

Amazon Redshift ML is a new machine learning service that enables data analysts, database developers, and data scientists to leverage Amazon SageMaker to create, train, and deploy ML models using SQL commands. In other words, data warehouse users do not need to move their data or have any machine learning expertise to create their own machine learning models for a variety of business use cases and applications, from churn prediction and product personalization to fraud detection.

Amazon Redshift ML is integrated with Amazon SageMaker Autopilot to automatically discover and fine-tune the best model, based on the training data.

Amazon Neptune ML

Amazon Neptune ML is a new capability of Amazon Neptune that enables easy, fast predictions for graph applications, which AWS says can be over 50% more accurate than predictions made with non-graph methods. It empowers professionals without expertise in ML to use Graph Neural Networks (GNNs) to improve prediction accuracy in such use cases as knowledge graphs, product recommendations, fraud detection, and customer retention and acquisition.

The service enables users to automatically select and train the best model for graph data. It lets them run ML on their graphs using Neptune APIs and queries. As a result, machine learning for Amazon Neptune data can be created, trained, and applied in hours instead of weeks.

Industrial & Healthcare AI

During his keynote, Andy Jassy gave more than a few cues that AWS has revamped its approach to the market for AI and machine learning. It seems the company will now prioritize delivering solutions that solve real business problems, end to end, rather than churning out multiple ML services just for “builders.” This year the focus was on Industrial AI & Healthcare AI solutions.

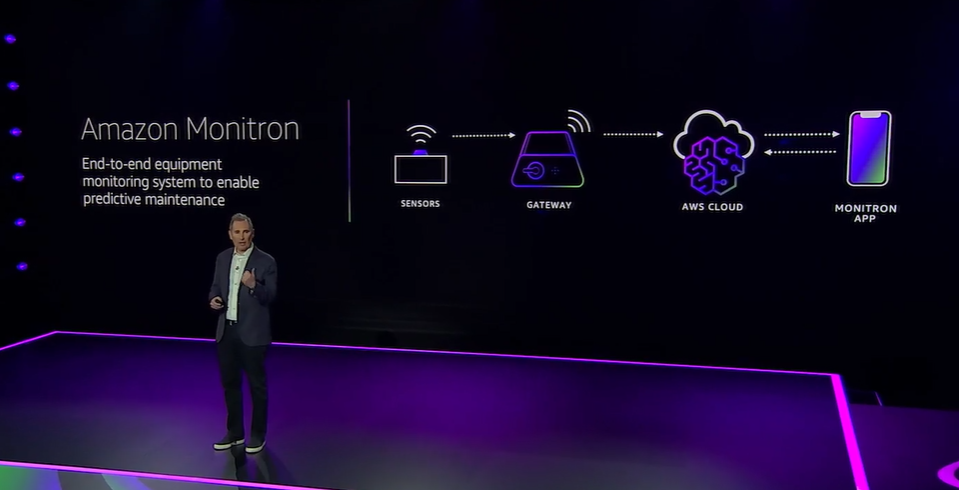

Amazon Monitron

Amazon Monitron is an end-to-end ML system for equipment maintenance. The solution allows manufacturers to apply machine learning to detect abnormal behavior in industrial machinery, implement predictive maintenance, and reduce unplanned downtime.

It consists of IoT sensors to capture such data as vibration and temperature, a gateway to automatically transfer data to the cloud, a machine learning cloud service to detect abnormal equipment patterns, and a companion mobile app to set up the devices and to monitor equipment behavior in real time.

Amazon Monitron enables manufacturers to start tracking the health of equipment in minutes, with no machine learning expertise needed. A technology similar to Amazon Monitron is used to monitor equipment in Amazon Fulfillment Centers.

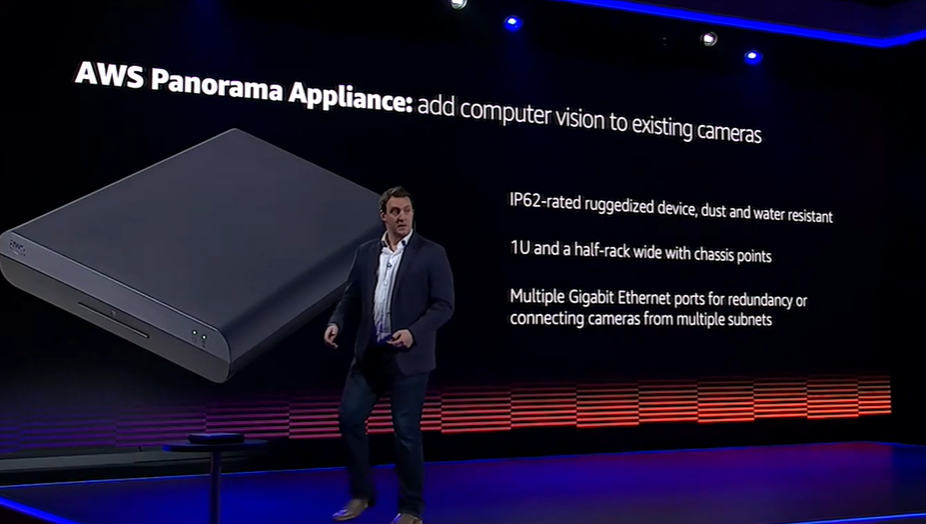

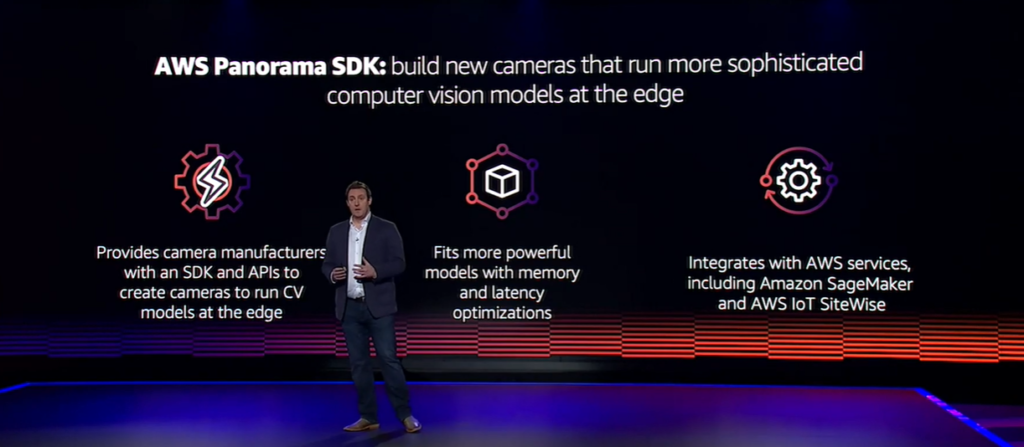

AWS Panorama

AWS Panorama is a new machine learning appliance and SDK for computer vision on the edge. The service makes it easier for organizations to start using computer vision on their on-premises cameras, without obligating them to stream video to the cloud. Highly accurate, low latency predictions are made on-site in the AWS Panorama Appliance.

By using AWS Panorama, manufacturers can improve operational efficiency by automating monitoring and visual inspection tasks like quality inspection, worker safety, and analysis of industrial processes, even with limited internet connectivity.

The AWS Panorama Appliance is a hardware device that can be installed and connected to existing cameras in the network, to run computer vision models on multiple concurrent video streams with the AWS Panorama Device SDK.

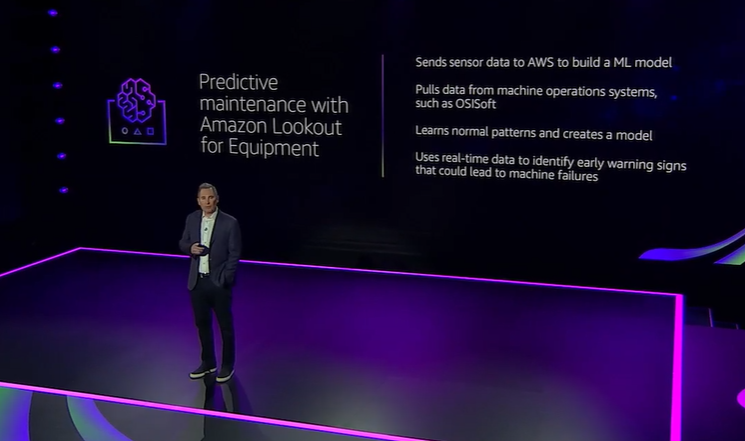

Amazon Lookout for Equipment

Amazon Lookout for Equipment enables manufacturers to use their existing sensors to make the most of the data they collect, to detect abnormal equipment behavior with AI and advanced analytics.

The service provides a way to send sensor data to the AWS cloud, where it is used to build machine learning models that return predictions and help take action before machine failures occur, avoiding unplanned downtime. In other words, it enables predictive maintenance for industrial machinery.

Amazon Lookout for Equipment analyzes such sensor data as pressure, flow rate, RPMs, temperature, and power to automatically train specific ML models for a customer’s equipment, with no ML expertise required.

Amazon Lookout for Vision

Amazon Lookout for Vision is a new machine learning service for quality inspection. It utilizes computer vision and anomaly detection to make it easier for manufacturers to find visual defects in industrial products at scale.

Lookout for Vision automates real-time visual inspection for processes like quality control and defect assessment, with no machine learning expertise required. The most popular use cases include detecting missing components in products, identifying damage to vehicles or structures, and spotting irregularities in production lines.

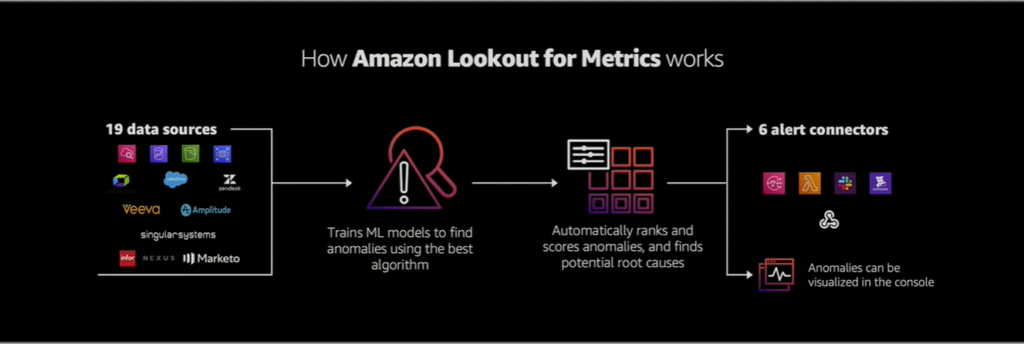

Amazon Lookout for Metrics

Amazon Lookout for Metrics is a new anomaly detection service that applies machine learning to automatically detect and diagnose outliers in business and operational time series data. It can identify unexpected changes in metrics more accurately than traditional rule-based methods, helping customers reduce false alarms while proactively monitoring the health of the business.

Amazon Lookout for Metrics groups related anomalies together, ranks them by severity, and highlights potential root causes of anomalies. It sends alerts through various channels to prompt immediate action on the detected anomaly. It also uses human feedback on detected anomalies to automatically tune the results and continuously improve accuracy.
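
For readers new to time series anomaly detection, the sketch below shows a simple statistical baseline: flag a point when it deviates from the mean of a trailing window by more than a set number of standard deviations. This is not the service's algorithm, which the article describes only as machine learning based; the function name, window size, threshold, and sample data are assumptions chosen for illustration.

```python
# Simple trailing-window z-score detector (illustrative baseline only;
# not the method used by Amazon Lookout for Metrics).
from statistics import mean, stdev

def find_anomalies(series, window=5, threshold=3.0):
    """Return indexes whose value is more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        # A perfectly flat window has sigma == 0 and is skipped.
        if sigma > 0 and abs(series[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies

# Hypothetical daily order counts with one obvious spike at index 7.
daily_orders = [100, 102, 98, 101, 99, 100, 103, 250, 101, 99]
spikes = find_anomalies(daily_orders)
```

A fixed threshold like this is easy to reason about but tends to misfire on seasonal or trending data, which is the gap that learned detectors aim to close.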

Amazon HealthLake

Amazon HealthLake is a HIPAA-eligible, machine learning-enabled service for healthcare providers, health insurers and pharmaceutical companies that makes it easier to handle health data at scale.

Because health data is often incomplete, inconsistent, and stored across various formats and systems, it has always been challenging to make sense of it, and then use it to improve clinical decision making, provide better patient support, and design more efficient clinical trials.

Amazon HealthLake organizes, indexes, and structures patient information using specialized machine learning models. Once available, the data can be queried and analyzed in a secure, compliant, and auditable manner. The service can be integrated with Amazon QuickSight and Amazon SageMaker for more advanced ML capabilities.

Amazon QuickSight Q and Evolution of Call Center with Amazon Connect

At re:Invent, speakers repeatedly mentioned COVID-19 and cited the impact of the pandemic on business. Forced to increase efficiencies, companies turned to AI, analytics, and automation. Amazon QuickSight Q and Amazon Connect’s new ML services are welcome additions to help businesses make the most of their data, communicate better with their customers, and reduce the volume of manual tasks that require a human presence in office spaces.

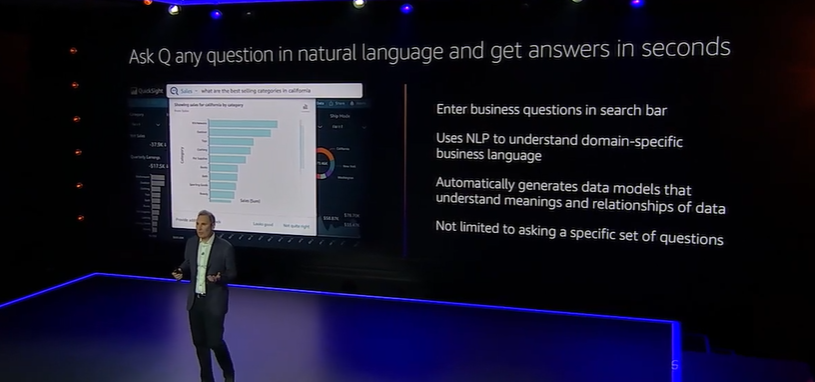

Amazon QuickSight Q

Amazon QuickSight Q is a new capability of Amazon QuickSight that utilizes machine learning-powered natural language capabilities to enable business users to ask questions about their data using everyday business language.

With QuickSight Q, business leaders no longer have to ask their BI teams to look for answers in data dashboards; they simply type their questions into the search bar. Instead of waiting days or even weeks, they get answers in seconds.

Q uses NLP and schema understanding to identify users’ intent and the meaning of the underlying data, to generate correct answers. It comes pre-trained on data from various domains and industries (e.g., sales, marketing, operations, retail) and continuously improves over time by learning from user interactions, with a human in the loop.

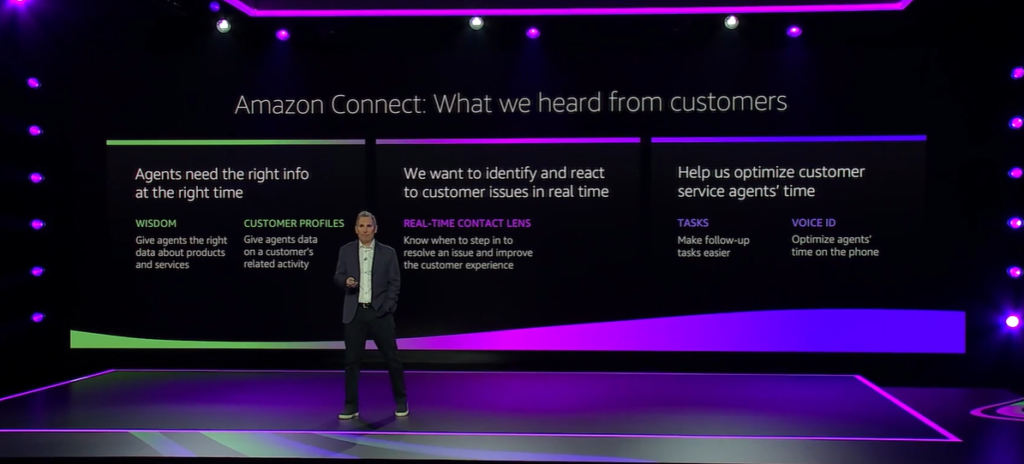

Amazon Connect

Amazon Connect, an omnichannel cloud contact center powered by machine learning, was released back in 2017. This year, five new features and capabilities were added to the pool, to help businesses provide superior customer service at a lower cost.

- Amazon Connect Wisdom. This ML-powered service enables businesses to reduce the time agents spend searching for answers by querying across databases and repositories. Because machine learning allows agents to use phrases and questions exactly as the customer would ask them, answers are found quickly and without silos. When used with Contact Lens for Amazon Connect, the service leverages real-time analytics to automatically detect customer issues during calls, then recommends information in real time to help resolve the issue, eliminating the need for an agent to search manually.

- Amazon Connect Customer Profiles. This service leverages machine learning to enable contact center agents to access and analyze customer profile data. It automatically aggregates customer information from multiple applications (Salesforce, ServiceNow, Zendesk, and Marketo), scans and matches customer records based on unique identifiers to surface a unified customer profile, and delivers it to agents at the beginning of the customer interaction. With a more unified view of a customer’s current situation, the agents can provide more personalized customer service.

- Real-Time Contact Lens for Amazon Connect. Launched at re:Invent 2019, Contact Lens for Amazon Connect uses machine learning to analyze call recordings, to figure out customer sentiment and trends, and to check if the conversations are compliant. With Real-Time Contact Lens released this year, contact center supervisors can take advantage of real-time call analytics, to detect customer issues during live calls and resolve them faster. The service takes into consideration speech patterns, volume levels, and specific language to figure out if a customer is dissatisfied. It then pushes real-time alerts to the Connect real-time metrics dashboard for supervisors to review.

- Amazon Connect Tasks. This ML service enables businesses to efficiently prioritize, assign, and track customer service tasks across applications used by customer support agents. It provides a single place for agents to receive calls, chats, and customer service tasks. Repetitive tasks can be automated and their status monitored using real-time metrics. The service provides pre-built integrations with CRM applications and APIs, to easily integrate with in-house and business-specific applications. Amazon Connect Tasks improves agent productivity and lowers costs through better task management.

- Amazon Connect Voice ID. This service provides real-time caller authentication so that support agents can communicate with customers in a more secure and efficient manner. With Voice ID, agents can identify customers without asking questions such as their birthdate or mother’s maiden name. Voice ID uses machine learning to analyze the caller’s voice characteristics, such as speech rhythm, pitch, intonation, and volume, and creates a unique digital voiceprint. When the same customer calls again, the service compares the caller’s voiceprint with the enrollment voiceprint and then provides a real-time result of “authenticated” or “not authenticated.” If the caller does not meet the minimum authentication score, support agents can authenticate them by conventional means.
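
The Voice ID flow of comparing a caller's voiceprint with an enrolled one against a minimum score can be sketched as follows. The article does not say how voiceprints are represented or scored, so everything here is an assumption for illustration: the voiceprints are modeled as short numeric vectors, the score is cosine similarity, and the `min_score` value of 0.9 is hypothetical.

```python
# Threshold-based voiceprint check (illustrative assumptions throughout;
# not the Amazon Connect Voice ID API or its scoring method).
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def authenticate(enrolled_print, caller_print, min_score=0.9):
    """Compare the caller's voiceprint with the enrolled one."""
    score = cosine_similarity(enrolled_print, caller_print)
    return "authenticated" if score >= min_score else "not authenticated"

# Hypothetical voiceprints.
enrolled = [0.9, 0.1, 0.4]
same_caller = [0.88, 0.12, 0.41]
other_caller = [0.1, 0.9, 0.2]

result_same = authenticate(enrolled, same_caller)
result_other = authenticate(enrolled, other_caller)
```

When the result is "not authenticated", the agent would fall back to conventional verification, as described in the last bullet above.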

What’s Next?

AWS re:Invent 2020 was saturated with announcements in the space of AI and machine learning. The cloud provider has released over 30 new ML/AI services, features, and capabilities.

Reflecting on the results of the event, it is clear that AWS is becoming more business-oriented, to keep up with its competitors at Google (innovative startups) and Microsoft (enterprise clients). However, the company has not forgotten its prime segment: builders. This year, new tools, inferences, and chips have been added to the AWS roster at an unprecedented rate. This pace of innovation is in many ways possible thanks to AI and machine learning, which have basically created a self-perpetuating innovation ecosystem.

At Provectus, we have been carefully following re:Invent announcements and updates. We are excited about the possibilities ahead, and we look forward to trying AWS’ new machine learning services to drive value for our clients’ businesses.