
Pr3vent Uses Machine Learning on AWS to Combat Preventable Vision Loss in Infants

Author:

Provectus, AI-first consultancy and solutions provider.

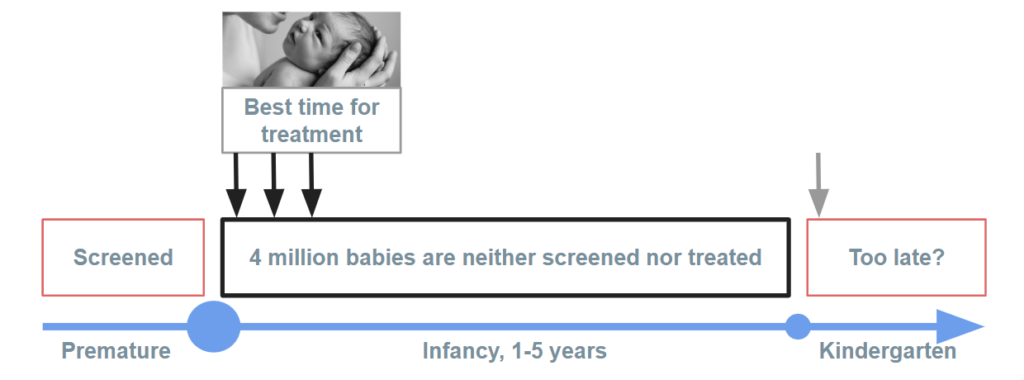

The best time to detect and treat preventable eye conditions is within the early months of a newborn’s life.

And yet, the eyes of millions of babies across the United States go unscreened since proficient ophthalmologists simply don’t have the capacity to examine every newborn’s retina.

Doctors capable of accurately diagnosing eye conditions in infants are rare, and they’re often overwhelmed by large volumes of manual work, making them inaccessible to millions of patients who need them most.

Scaling doctors’ expertise through artificial intelligence (AI) and machine learning (ML) provides an affordable and accurate solution, giving millions of infants equal access to eye screening.

In this post, we’ll explore how Pr3vent, a medical AI company founded by ophthalmologists, teamed up with AWS Machine Learning Competency Partner Provectus to develop an advanced disease screening solution powered by deep learning that detects pathology and signs of possible abnormalities in the retinas of newborns.

The solution has shown 96 percent accuracy in anomaly detection, dramatically reducing the manual workload of ophthalmologists. By scaling doctors’ expertise through innovative computer-aided diagnosis, Pr3vent has improved patient diagnosis, reduced cost per screening, and expanded the availability of eye screening to newborn infants across the U.S.

Building a Disease Screening Solution for Doctors

Screening at birth reveals pathologies in the first months of an infant’s life, giving parents more time to find experts to evaluate, treat, and prevent the progression of disease.

It’s estimated that more accessible and affordable eye screening could prevent vision impairment or loss for four million babies in the U.S. per year.

Pr3vent attracted qualified and experienced ophthalmologists to label and validate 350,000 fundus and retina images, which required 35,000 infants to be screened. The volume of the database ensured broad variability of screened cases, which, combined with labeling by expert ophthalmologists, was a key factor in training a highly accurate ML model, the core component of the proposed disease screening solution for ophthalmologists.

Pr3vent also had to build an AI-driven engine for image analysis and anomaly detection that was capable of detecting signs of more than 50 pathologies in eye screens uploaded to the application by doctors.

These complex tasks prompted Pr3vent to join forces with Provectus, an AWS Partner Network (APN) Premier Consulting Partner with AWS Competencies in ML, Data & Analytics, and DevOps. An AI-first technology consultancy and solutions provider, Provectus helps customers design, architect, migrate, or build cloud-native applications on Amazon Web Services.

To deliver an advanced and user-friendly eye screening solution for Pr3vent, the team at Provectus developed the AI solution in three stages:

- Image labeling infrastructure

- Machine learning model building and training infrastructure

- Disease detection and diagnosis application

Below, I’ll explain how the team approached each of these stages, as well as provide a more detailed overview of the infrastructure for machine learning we designed and built for Pr3vent.

Image Labeling Infrastructure

Pr3vent has exclusive access to a database of more than 350,000 retina and fundus images of newborns. They also had the good fortune to attract some of the best ophthalmologists in the U.S. to label the training dataset.

However, they still needed a solution to speed up the labeling and make it more efficient than standard image-labeling tools allowed.

As the first step, Provectus built an image labeling infrastructure designed for ophthalmologists. It accelerated the labeling of medical images by 4x, enabling doctors to process up to 72 eye screens per minute (12 screens per patient). Faster labeling saved Pr3vent dozens of hours of ophthalmologists’ precious time.

Since data quality is paramount in machine learning, the infrastructure for image labeling was designed to eliminate any signs of bias. It also had to ensure objectivity of the training dataset, thereby increasing accuracy of the final ML model.

Machine Learning Infrastructure for Model Building and Training

In addition to labeling and data quality, the underlying infrastructure also affects the accuracy of models. ML infrastructure makes it possible to manage the models’ results in a transparent and well-monitored environment, and to improve the models on new, labeled, and consistent data. Pr3vent is a medical AI company, so it’s critical to ensure that its ML model is able to adapt and improve in real-world use.

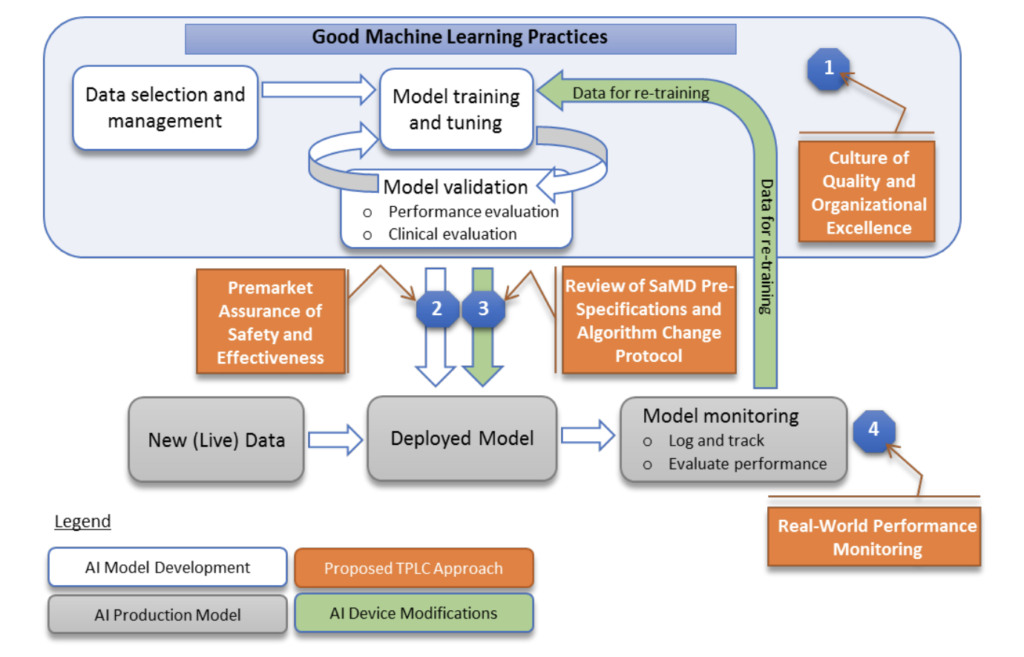

The importance of ML infrastructure for companies looking to implement AI solutions in healthcare is highlighted by the FDA. According to the regulator:

[ML infrastructure] provides reasonable assurance of safety and effectiveness throughout the lifecycle of the organization and products so that patients, caregivers, healthcare professionals and other users have assurance of the safety and quality of those products. [It] enables the evaluation and monitoring of a software product from its premarket development to post market performance, along with continued demonstration of the organization’s excellence.

The image below shows an example of a high-level architecture from the FDA for AI/ML-based Software as a Medical Device (SaMD).

For Pr3vent, Provectus built an advanced machine learning infrastructure following FDA’s best practices for safety and efficiency. It features fully auditable labeling, dataset management, model training, model evaluation, model release management, model inferencing, prediction explainability, and model monitoring components. The infrastructure is integrated into an end-to-end AI platform for healthcare.

To build the infrastructure, a variety of AWS services were used, including Amazon Simple Storage Service (Amazon S3), AWS Glue, Amazon Elastic Kubernetes Service (Amazon EKS), and Amazon SageMaker. Additionally, PyTorch, Petastorm, and Provectus Machine Learning Infrastructure were utilized.

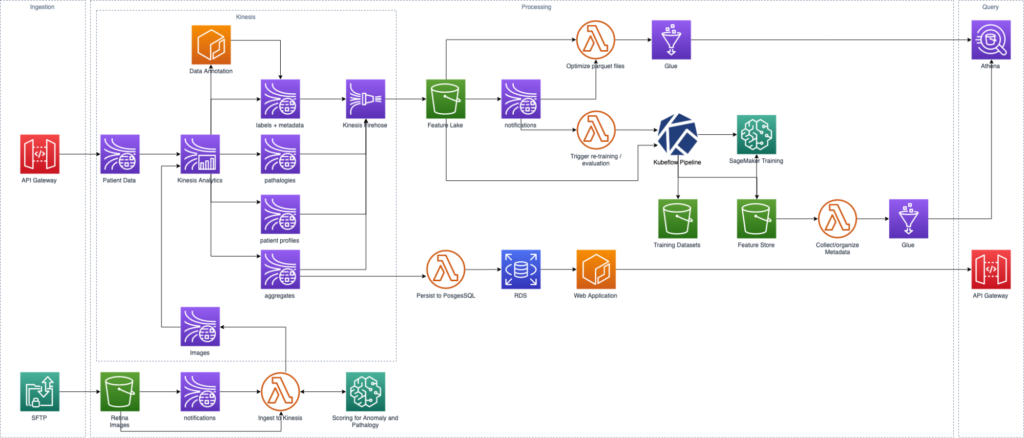

Figure 4 shows a high-level diagram of the system’s entire pipeline.

Here’s how the Provectus solution utilized AWS services:

- Amazon S3: stores images, features, models, experiment results, and any other produced data

- Amazon SageMaker: runs experiments, model training jobs, and model inference

- AWS Lambda: processes infrequent, simple events

- Amazon RDS: serves as simple, reliable storage for web application data

- Amazon Kinesis Data Streams: serves as the primary tool for transmitting continuous flows of information

- Amazon EKS: hosts internal services such as the data preparation service and the web application

- Amazon Kinesis Data Firehose, AWS Glue, and Amazon Athena: load streaming data and aggregate it for analysis and investigation

- Amazon API Gateway: serves as the front door for the web application and data ingestion

Disease Detection and Diagnosis Application

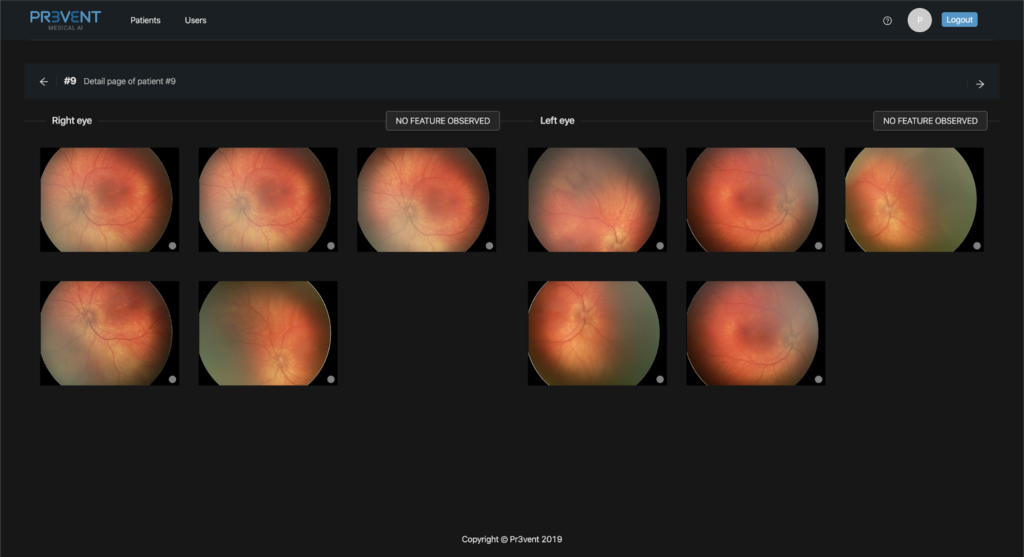

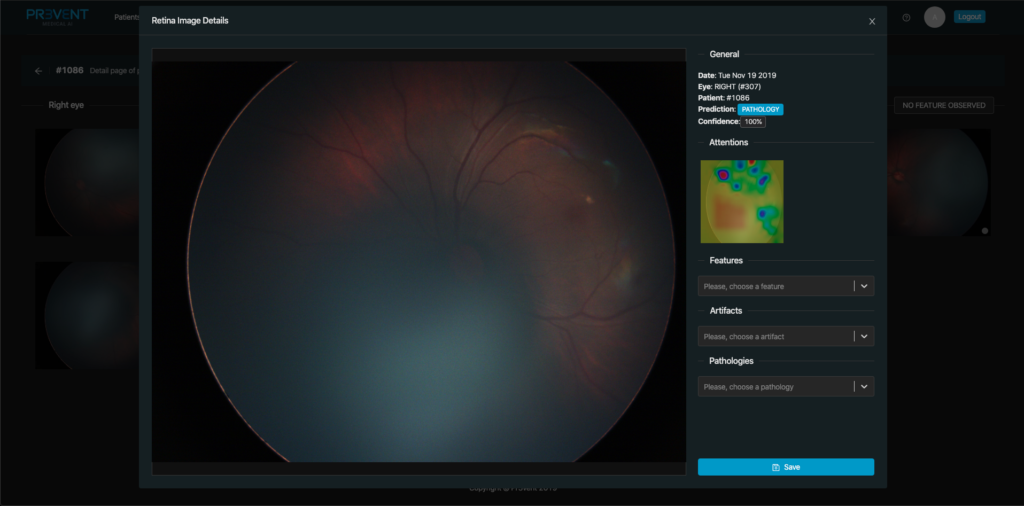

Eye screening is performed in the main application—the Disease Detection and Diagnosis app. Here, doctors examine screening results to check the accuracy of pathology detection and classification by machine learning.

In Figure 5 above, you see the fields that doctors use for markup. These fields are the following:

- Label

- Title and version of the ML model

- Prediction result

- Prediction accuracy in %

- Explainability, with pathology areas highlighted in the image

The Disease Detection and Diagnosis app is used by ophthalmologists as an interface to display all the screened images. Images can be sorted to find those with detected anomalies.

To simplify and speed up diagnosis of specific conditions, each image comes with an explainability section where doctors can examine signs of abnormalities in more detail.

The application allows doctors to focus on patients who require immediate assistance. This saves doctors the time they would otherwise spend looking through dozens of images of healthy retinas with no signs of pathology.
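The sorting step described above can be pictured with a minimal sketch. It is not the application’s actual code: the `ScreeningResult` record, its field names, the `"normal"` class name, and the sample values are all assumptions made for illustration, loosely mirroring the markup fields listed earlier (the explainability overlay is left out).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ScreeningResult:
    """Hypothetical record mirroring the markup fields shown to doctors."""
    image_id: str
    label: str             # doctor-assigned label, empty until reviewed
    model_version: str     # title and version of the ML model
    prediction: str        # predicted class; "normal" is an assumed name
    confidence_pct: float  # the percentage shown next to the prediction


def triage(results: List[ScreeningResult], normal: str = "normal") -> List[ScreeningResult]:
    """Keep only images flagged as anomalous, highest percentage first."""
    flagged = [r for r in results if r.prediction != normal]
    return sorted(flagged, key=lambda r: r.confidence_pct, reverse=True)


# Illustrative values only
results = [
    ScreeningResult("img-001", "", "model-v1", "normal", 99.1),
    ScreeningResult("img-002", "", "model-v1", "anomaly", 71.5),
    ScreeningResult("img-003", "", "model-v1", "anomaly", 96.3),
]
queue = triage(results)
```

With an ordering like this, a doctor would open the most strongly flagged images first and could skip the healthy ones entirely.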

Building a Machine Learning Infrastructure for Medical AI

The infrastructure that Provectus designed and built for Pr3vent’s computer vision-powered eye screening solution is, fundamentally, an end-to-end platform for deploying production ML pipelines, with a highly accurate ML model on top.

It has the capability to train and retrain the ML model on new incoming data, and gradually improve screening accuracy of the solution.

It can also validate newly created ML models and compare them with older versions to ensure that the most robust model is deployed in production. This is a critical advantage for deep neural network models that rely on freezing layers to update weights once all training tests are completed.

Here are the steps we took to build the solution for Pr3vent:

Convenient and Scalable Model Training

Provectus delivered a secure and reproducible experimentation and ML training infrastructure in which data is stored in Amazon S3, and pipelines are orchestrated and ML models are trained in Kubeflow. Some of the ML models, as well as analytics, were run in Amazon SageMaker. This gave us accurate, trackable data versioning, and let us use Catalyst and Lightning for fast prototyping and efficient reproducibility of results.

Using Amazon SageMaker Training Jobs, you can launch several model training algorithms and review the results afterward. You can also tune a model’s hyperparameters with hyperparameter tuning in Amazon SageMaker.

To keep the training algorithms organized, a separate folder was allocated to each, with the following structure.

model-name/

model-name/main.py

model-name/requirements.txt

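```python
import sagemaker
from sagemaker.pytorch import PyTorch

sagemaker_session = sagemaker.Session()
role = sagemaker.get_execution_role()  # IAM role the training job runs under

# Parameter names follow SageMaker Python SDK v1; in SDK v2 they were
# renamed to instance_count and instance_type.
estimator = PyTorch(
    source_dir='./model-name',   # folder with main.py and requirements.txt
    entry_point='main.py',
    role=role,
    framework_version='1.2.0',
    train_instance_count=2,
    train_instance_type='ml.p3.2xlarge',
    hyperparameters={            # passed to main.py as command-line arguments
        'epochs': 20,
        'lr': 0.001,
        'optim': 'Adam',
    },
)
```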
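On the receiving side, SageMaker script mode hands the `hyperparameters` dictionary to the entry point as command-line arguments and exposes paths through `SM_*` environment variables. The sketch below shows how a `main.py` might read them. It is an assumption about how such a script could look, not Pr3vent’s actual code: the `parse_args` helper, the local fallback paths, and the `training` channel name are hypothetical.

```python
import argparse
import os
from typing import List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Read hyperparameters and SageMaker-provided paths for a training run."""
    parser = argparse.ArgumentParser(description="Training entry point (sketch)")

    # Hyperparameters arrive as --name value pairs
    parser.add_argument('--epochs', type=int, default=20)
    parser.add_argument('--lr', type=float, default=0.001)
    parser.add_argument('--optim', type=str, default='Adam')

    # SM_MODEL_DIR and SM_CHANNEL_<NAME> are set inside the training container;
    # the fallbacks (assumed paths) allow the script to run locally.
    parser.add_argument('--model-dir', type=str,
                        default=os.environ.get('SM_MODEL_DIR', './model'))
    parser.add_argument('--train-dir', type=str,
                        default=os.environ.get('SM_CHANNEL_TRAINING', './data'))

    # Ignore any extra arguments the platform may append
    args, _unknown = parser.parse_known_args(argv)
    return args


# Equivalent to what the estimator configuration above would pass in
args = parse_args(['--epochs', '20', '--lr', '0.001', '--optim', 'Adam'])
```

Keeping the defaults aligned with the estimator’s values means the same script behaves identically whether it is launched locally or as a training job.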

The random seed is set in the main file, and the experiment is defined in Catalyst terms.

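```python
from catalyst.dl import SupervisedRunner
from catalyst.dl.callbacks import EarlyStoppingCallback
from catalyst.utils import set_global_seed, prepare_cudnn

# catalyst_utils is not imported in the original snippet; it appears to be a
# project-local module holding the metric callbacks and the MLFlowCallback.
# SEED, model, criterion, optimizer, scheduler, loaders, and the remaining
# arguments are defined elsewhere in main.py.

# Fix the random seed and force deterministic cuDNN for reproducible runs
set_global_seed(SEED)
prepare_cudnn(deterministic=True)

runner = SupervisedRunner()
runner.train(
    model=model,
    criterion=criterion,
    optimizer=optimizer,
    scheduler=scheduler,
    loaders=loaders,
    fp16=fp16,
    logdir=logdir,
    num_epochs=num_epochs,
    callbacks=[
        catalyst_utils.PrecisionCallback(),
        catalyst_utils.RecallCallback(),
        catalyst_utils.F1ScoreCallback(),
        catalyst_utils.AccuracyCallback(),
        EarlyStoppingCallback(**early_stop_kwargs),
        catalyst_utils.MLFlowCallback(),
    ],
    verbose=verbose,
    check=check,
)
```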

After that, launching the experiment is simple.

```python
experiment = Experiment(model, *args, **kwargs)
experiment.run(loaders, check=False, verbose=True)
```

With a wrapper like this, it’s possible to quickly try out multiple models and their underlying algorithms.

Initially, we used MLflow for model comparison. Thanks to the convenient callbacks in Catalyst, the integration was simple to implement. Following that approach, we pushed all metrics to MLflow.

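```python
import mlflow
# Import path as in older Catalyst releases that expose RunnerState (an
# assumption); adjust it to the installed version.
from catalyst.dl.core import Callback, RunnerState


class MLFlowCallback(Callback):
    """Forwards batch-level and epoch-level metrics to MLflow."""

    def __init__(
        self,
        input_key: str = 'targets',
        output_key: str = 'logits',
    ):
        self.input_key = input_key
        self.output_key = output_key

    def on_batch_end(self, state: RunnerState):
        # Log every metric computed for the current batch
        batch_values = state.metrics.batch_values
        for key, value in batch_values.items():
            mlflow.log_metric(key, value)

    def on_epoch_end(self, state: RunnerState):
        # Epoch metrics are nested; log each one under a 'key/metric' name
        epoch_values = state.metrics.epoch_values
        for key, values in epoch_values.items():
            for k, v in values.items():
                mlflow.log_metric('/'.join([key, k]), v)
```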
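To make the epoch-level naming concrete, here is a small standalone sketch of the same flattening logic, without Catalyst or MLflow. The `flatten_epoch_metrics` helper and the sample values are hypothetical, and the outer keys are assumed to be loader names such as `train` and `valid`.

```python
from typing import Dict


def flatten_epoch_metrics(epoch_values: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Turn {outer: {metric: value}} into {'outer/metric': value}."""
    flat = {}
    for key, values in epoch_values.items():
        for k, v in values.items():
            flat['/'.join([key, k])] = v
    return flat


# Illustrative values only
example = {
    'train': {'loss': 0.42, 'f1_score': 0.81},
    'valid': {'loss': 0.47, 'f1_score': 0.78},
}
flat = flatten_epoch_metrics(example)
```

Each flattened name maps to one metric series in MLflow, so training and validation curves for the same metric stay separate and can be compared across runs.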

The rest of main.py is essentially a template that you can find at AWS Labs.

Reduction of Model Training Costs

Amazon SageMaker can feed training data in File mode or Pipe mode. The PyTorch framework, however, supports File mode only, so the data is always copied to the training instance in its entirety.

Because Pr3vent stores 350,000 retina and fundus images of newborns, we needed to find a way to limit the number of access requests to data in Amazon S3, to reduce utilization costs. For that purpose, the data was compiled into several larger files with Uber’s Petastorm framework.

Though the data was modified and transformed along the way, format-wise it was stored as follows.

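```python
import numpy as np
from petastorm.codecs import CompressedImageCodec, ScalarCodec
from petastorm.unischema import Unischema, UnischemaField
from pyspark.sql.types import IntegerType

# One row per image; the trailing False marks each field as non-nullable
Pr3ventSchema = Unischema('Pr3ventSchema', [
    UnischemaField('uuid', np.int32, (), ScalarCodec(IntegerType()), False),
    UnischemaField('image_id', np.int32, (), ScalarCodec(IntegerType()), False),
    UnischemaField('image', np.uint8, (480, 640, 3), CompressedImageCodec('png'), False),
    UnischemaField('eye', np.int32, (), ScalarCodec(IntegerType()), False),
    UnischemaField('label', np.int32, (), ScalarCodec(IntegerType()), False),
])
```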
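A rough back-of-the-envelope calculation shows why packing images into larger files matters. The image count and shape come from the text and schema above; the `ROWS_PER_FILE` value is purely an assumption for illustration, since the actual file layout is not stated.

```python
import math

NUM_IMAGES = 350_000          # size of the image database
IMAGE_SHAPE = (480, 640, 3)   # height, width, channels of the uint8 'image' field
ROWS_PER_FILE = 5_000         # assumption: images packed into each larger file

# Uncompressed size of one image and of the whole dataset (decimal GB)
raw_bytes_per_image = math.prod(IMAGE_SHAPE)
raw_total_gb = NUM_IMAGES * raw_bytes_per_image / 1e9

# Objects to fetch per full pass over the data
objects_one_per_image = NUM_IMAGES
files = math.ceil(NUM_IMAGES / ROWS_PER_FILE)

print(f"raw image size: {raw_bytes_per_image:,} bytes")
print(f"raw dataset size: {raw_total_gb:.2f} GB")
print(f"objects per pass: {objects_one_per_image:,} vs {files:,}")
```

Under these assumptions, the number of objects read per pass drops by several orders of magnitude. A Parquet reader typically issues more than one ranged read per file, so treat the file count as a lower bound on requests rather than an exact figure.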

Model Comparison

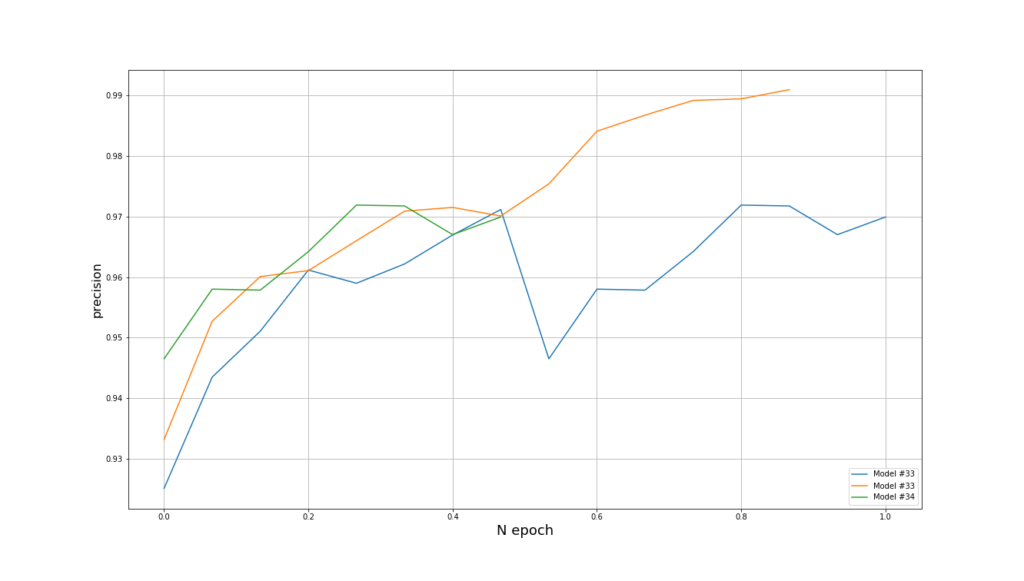

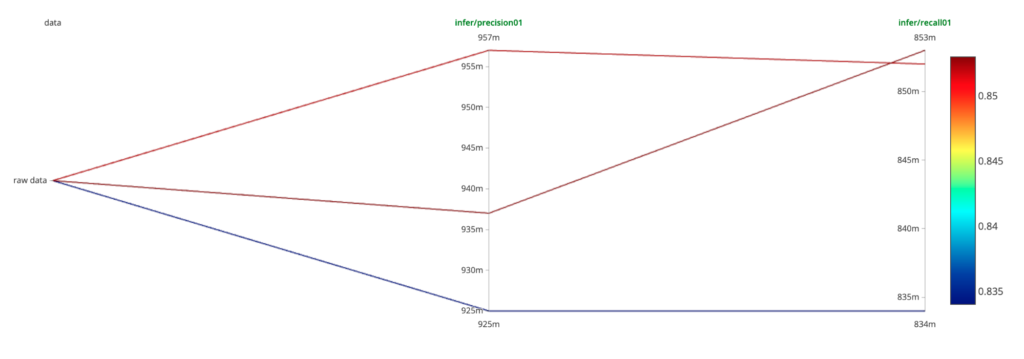

To ensure efficient comparison of models, a two-stage process was used.

As step one, models built on existing data can be compared offline. Jenkins pulls model metrics from Amazon S3 to compare them using MLflow.
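As a minimal sketch of what such an offline comparison could look like, the snippet below ranks models by F1 score with a recall floor, since missed pathology is the costlier error in screening. The selection rule, the `pick_best` helper, and the baseline figures are assumptions for illustration; the candidate figures are the precision and recall reported in the summary.

```python
from typing import Dict, Optional


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def pick_best(metrics_by_model: Dict[str, Dict[str, float]],
              min_recall: float = 0.0) -> Optional[str]:
    """Return the model with the highest F1 among those meeting the recall floor."""
    eligible = {
        name: m for name, m in metrics_by_model.items()
        if m['recall'] >= min_recall
    }
    if not eligible:
        return None
    return max(
        eligible,
        key=lambda name: f1_score(eligible[name]['precision'], eligible[name]['recall']),
    )


metrics_by_model = {
    'baseline': {'precision': 0.93, 'recall': 0.80},    # made-up figures
    'candidate': {'precision': 0.957, 'recall': 0.85},  # figures from the summary
}
best = pick_best(metrics_by_model, min_recall=0.8)
```

In a setup like the one described, the metrics dictionary would be filled from the files Jenkins pulls from Amazon S3 rather than hard-coded.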

As step two, models can be compared online using real-time streaming data. To test a new model online, we kept the current model serving inference and duplicated all traffic to the new model, so we could follow the metrics of both in real time.

We applied anomaly detection algorithms to these metrics, analyzed the models for anomalies and, after several iterations, replaced the older model with the new one.
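The traffic duplication used for the online comparison is often called shadow testing. The sketch below illustrates the idea in plain Python: every request goes to both models, only the production answer is returned, and the disagreement rate is tracked. The `shadow_compare` function and the threshold-based stand-in models are hypothetical, and the real system streams metrics rather than looping over a list.

```python
from typing import Any, Callable, Iterable, List, Tuple


def shadow_compare(
    requests: Iterable[Any],
    production_model: Callable[[Any], Any],
    candidate_model: Callable[[Any], Any],
) -> Tuple[List[Any], float]:
    """Serve production predictions while scoring the candidate in the shadow."""
    served = []
    disagreements = 0
    total = 0
    for request in requests:
        prod = production_model(request)
        cand = candidate_model(request)  # recorded for comparison, never served
        served.append(prod)
        total += 1
        if prod != cand:
            disagreements += 1
    rate = disagreements / total if total else 0.0
    return served, rate


# Stand-in models: classify a score with two different thresholds
def production(score: float) -> str:
    return "anomaly" if score > 0.5 else "normal"


def candidate(score: float) -> str:
    return "anomaly" if score > 0.4 else "normal"


served, disagreement_rate = shadow_compare([0.1, 0.45, 0.6, 0.9], production, candidate)
```

A sudden change in a signal such as the disagreement rate is the kind of event an anomaly detection step could flag before the new model is promoted.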

Summary

The ML-powered eye screening solution developed by Provectus detects pathology in a newborn’s retina and fundus images with high accuracy, and at scale. The final ML model demonstrates 85 percent recall and 95.7 percent precision.

Given the high accuracy of anomaly detection in the solution, Pr3vent could revolutionize the way eye conditions are diagnosed and treated. Rather than scheduling an eye exam at an ophthalmologist’s office, babies’ eyes can be screened immediately after birth by medical personnel using a high-precision portable camera. The images are then uploaded to the Pr3vent app and read with machine learning to detect any signs of abnormalities in the fundus. If anomalies are detected, a visit to an eye doctor is arranged.

Pr3vent can free ophthalmologists from the unnecessary manual work of viewing thousands of healthy eye screening images. A doctor can simply access the Pr3vent app, sort out images with anomalies, and examine those images to make a specific diagnosis. By focusing on only those patients who need help, doctors can reduce their manual workload, increase their availability and provide a much faster diagnosis.

Given that up to 97% of eye conditions can be successfully treated in the first few months of life, Pr3vent may significantly reduce vision impairment and vision loss in adulthood for millions of infants worldwide.

Pr3vent is making massive strides to champion equal access to eye screening for millions. By scaling ophthalmologists’ expertise through automation and machine learning, the Pr3vent app is expected to reduce the cost per screening by 10x.

The ultimate combination of application availability, accurate anomaly detection and ease of access for doctors sets the Pr3vent application apart as a premier solution for vision screening. By making eye screening at birth accessible to all, it is projected that Americans could save up to $3 billion per year on the treatment and support of individuals suffering from vision loss and impairment. With the nation’s health at stake, Pr3vent offers a state-of-the-art tool to combat vision loss with AI.

Explore Machine Learning Infrastructure, designed and built by Provectus, and learn more about our exclusive ML Infrastructure Acceleration Program for businesses looking to embark on the AI journey.

Provectus — APN Partner Spotlight

Provectus is an AWS Premier Consulting Partner. An Artificial Intelligence consultancy and solutions provider, Provectus helps companies in Healthcare & Life Sciences, Retail & CPG, Media & Entertainment, Manufacturing, and Internet businesses achieve their objectives through AI. Provectus holds AWS competencies in Machine Learning, Data & Analytics, and DevOps.